Custom AI Chips and the Developer Ecosystem - Part 1

Exploring How Hyperscaler Silicon Innovation Could Reshape Developer Economics

Introduction

For years, cloud hyperscalers have invested heavily in designing custom chips, both CPUs and accelerators, tailored to their own applications and workloads. This trend has produced notable success stories: Google's Tensor Processing Units (TPUs), Meta's Training and Inference Accelerator (MTIA), and AWS's Inferentia chips have all demonstrated significant advantages over standard solutions. Multiple industry sources indicate that deploying these custom chips (also called ASICs, or application-specific integrated circuits) can reduce costs by approximately 40% compared to using GPUs from traditional vendors like NVIDIA.

While these developments clearly benefit hyperscalers by reducing their Total Cost of Ownership (TCO), a critical question emerges: Do these benefits meaningfully extend to developers and startups, potentially offering them greater flexibility and efficiency when deploying across different systems?

This article—the first in a series—explores how the chip customization trend among hyperscalers might impact the broader ecosystem of developers and startups, particularly those with limited resources. We investigate the technical and financial considerations that determine whether smaller players can leverage custom silicon to achieve deployment flexibility that remains elusive with current GPU solutions. Looking further ahead, we consider when developers might be able to create their own custom chips, and how long it will take for AI developers to make the leap from code to custom System-on-Chip (SoC) designs.

The AI Computing Spectrum: Different Needs for Different Profiles

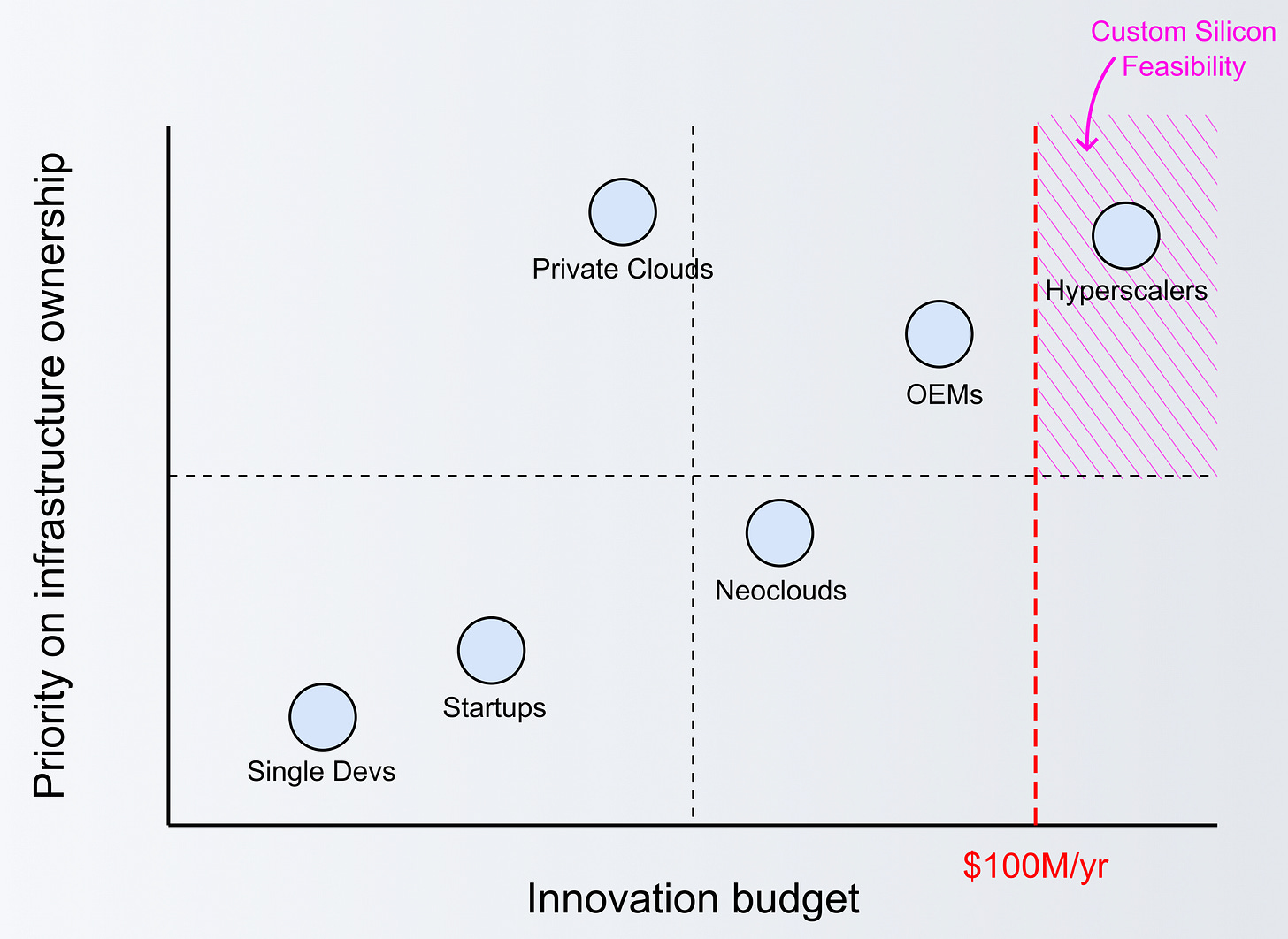

Despite significant advances in chip design over the past two decades, creating a custom chip remains an expensive proposition. The non-recurring engineering (NRE) costs for such projects typically start around $50 million, a prohibitive barrier for most organizations outside the hyperscaler category. Once the additional costs of taking the product to market are included, the figure can exceed $100 million (the custom-chip threshold used in this article). To understand whether developers can truly benefit from custom chips and accelerators, we need to examine the varying profiles of companies and their distinct motivations.

Individual Developers and Researchers

For individual developers and researchers, the primary concerns revolve around monthly costs, usability, and compatibility. They typically aren't deeply concerned with memory capacity, bandwidth, or the specific details of a system or platform—they simply need to prove concepts work, and at a low cost. These users seek flexible infrastructure and a common hardware/software platform that scales across different development stages.

Custom chips would be attractive to this segment if they provided substantial computing capacity at a significantly lower price point than current options. However, individual developers must carefully assess the risks of deviating from standard solutions like those from NVIDIA or AMD, which offer established ecosystems and broad compatibility.

Small Teams & Startups

Small teams and early-stage startups face the challenge of balancing innovation with practical constraints. They typically can't afford to develop their own hardware approaches unless exceptionally well-funded, and they have little time to spare on infrastructure customization. While they may be somewhat more concerned about deployment platform specifics than individual developers, they generally prioritize solutions that are easier and more economical to use.

For these organizations, ecosystem compatibility remains paramount—adopting technologies that are incompatible with mainstream development tools and libraries can significantly hamper productivity and time-to-market.

OEMs & Boutique System Builders

Original Equipment Manufacturers (OEMs) and boutique system builders represent the first category with strong motivations to seriously consider designing or leveraging custom chips. These companies need to create opportunities for differentiation in the marketplace, and they typically design for very specific scenarios. A great example is Apple: its vertical integration strategy and massive R&D budget allowed it to design its own chips, culminating in a new series of computers powered by its M-series chips that offer superior power and thermal efficiency (in a nutshell, the battery of an Apple computer with an M-series chip can last 4x longer than that of one with an Intel processor).

For most OEMs, a custom chip could save their clients considerable money, potentially becoming a competitive advantage. However, this approach requires substantial upfront investment, presenting a significant barrier even for established system builders. These companies must also navigate the challenges of scaling custom solutions beyond niche applications.

Private Clouds and Neoclouds

Private clouds and "neoclouds" occupy a middle ground between hyperscalers and smaller entities. These organizations compete directly with hyperscalers but differentiate by focusing on specific capabilities—for instance, specializing in GPU provisioning rather than offering diverse solutions.

This specialization can make them approximately 60% more cost-effective than traditional cloud providers when serving GPUs. However, beyond lower prices and potentially easier access to the latest hardware, neoclouds struggle to find additional competitive advantages. They remain dependent on the same chip vendors used by hyperscalers and must compete for access to high-demand components like GPUs, which often have extended lead times of six months or more.

To maintain operational efficiency, neoclouds can't afford to support legacy chips and architectures that enterprises often require for continued support of their production workloads. This creates some tension, as enterprises typically resist redesigning their systems every few years due to the difficulty and cost involved.

Neoclouds could find new ways to compete with hyperscalers if they could:

- Help enterprise clients easily upgrade deployments from previous generations to new ones
- Fit more users into existing systems without performance degradation
- Design custom chips addressing their specific datacenter needs while eliminating components that don't contribute positively to their TCO

However, the $100 million entry point for custom chip design remains prohibitive for most players in this category.

Hyperscalers

Today, hyperscalers (e.g., AWS, GCP, Azure) are the only group inside the custom-silicon feasibility region. They have enough resources to start a chip design project and, once they deploy the chip in their data centers, they can promptly collect the benefits by cutting the cost of running their own workloads by roughly 40%.

For hyperscalers, the calculation extends beyond immediate costs to long-term TCO considerations, including real estate and operational expenses. At their scale, custom silicon makes financial sense despite the enormous upfront investment, as the benefits can be amortized across millions of workloads.
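The amortization argument can be made concrete with a rough break-even calculation. The sketch below is an illustration only: it takes the $100 million threshold and the approximately 40% savings figure quoted in this article, and it assumes (hypothetically) that the savings apply uniformly to a fixed annual GPU-equivalent spend and that the organization wants to recover its investment within a chosen payback window. The function name and the payback windows are assumptions made for the example, not figures from any vendor.

```python
def break_even_annual_spend(nre_usd: float, savings_rate: float, payback_years: float) -> float:
    """Annual accelerator spend at which the savings repay the upfront cost.

    Simplified model (an assumption for illustration):
        savings_rate * annual_spend * payback_years >= nre_usd
    """
    if not 0 < savings_rate <= 1:
        raise ValueError("savings_rate must be in (0, 1]")
    if payback_years <= 0:
        raise ValueError("payback_years must be positive")
    return nre_usd / (savings_rate * payback_years)


# Figures taken from the article: $100M entry point, roughly 40% savings.
UPFRONT_COST_USD = 100e6
SAVINGS_RATE = 0.40

# The payback windows below are hypothetical choices, not sourced data.
for years in (1, 3, 5):
    spend = break_even_annual_spend(UPFRONT_COST_USD, SAVINGS_RATE, years)
    print(f"{years}-year payback: about ${spend / 1e6:.0f}M of GPU-equivalent spend per year")
```

Under these simplifying assumptions, an organization needs tens to hundreds of millions of dollars in yearly accelerator spend before a custom chip pays for itself, which is consistent with hyperscalers being the only group that clears the bar today.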

Additionally, possessing their own custom silicon can give them leverage to negotiate better deals with AI chip makers (e.g., NVIDIA, Intel, AMD) when purchasing systems to outfit new data centers.

The Developer's Perspective

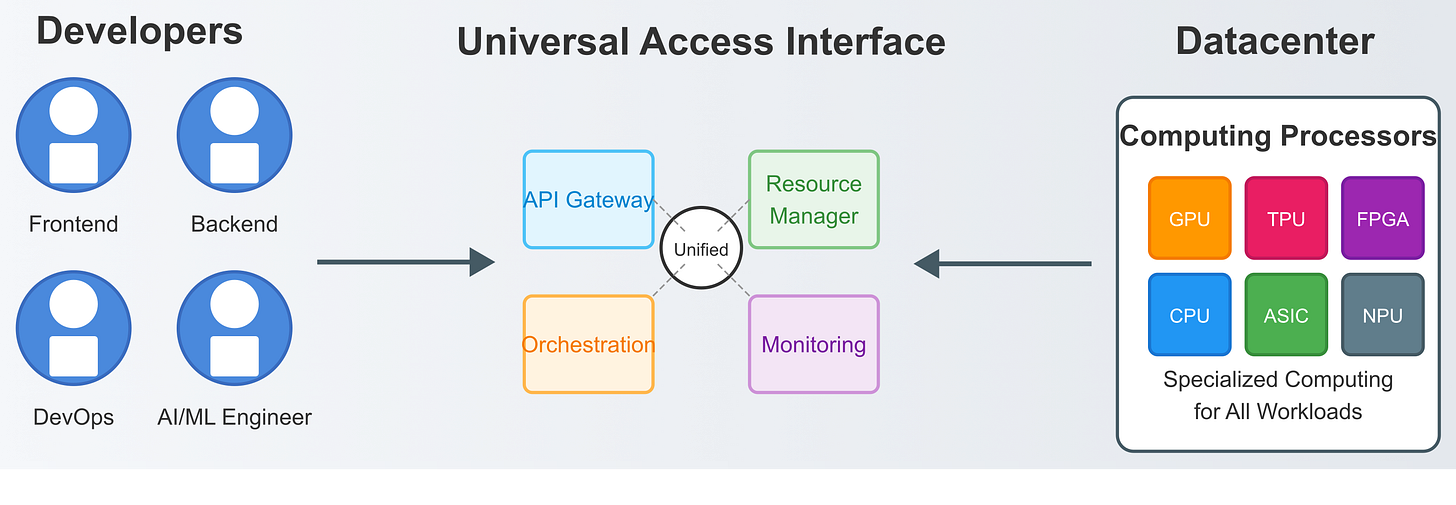

From a developer's standpoint, several factors take precedence when evaluating computing platforms:

What Matters Most to Developers?

- Ease of use and development environment: Tools and frameworks that streamline workflow
- Community support and available resources: Documentation, examples, and problem-solving communities
- Cost structures: Balancing monthly/hourly operational costs against capital expenditures
- Flexibility: The ability to adapt to evolving AI models and techniques (recognizing that GPUs, while versatile, aren't ideal for all AI workloads)

Are Hyperscalers' Custom Chips Attractive for Developers?

In principle, yes—but with significant caveats. For hyperscalers' custom chips to gain traction with the developer community:

They must offer comprehensive SDKs and libraries that developers can integrate with their existing code, enabling straightforward interfacing with the custom chips

Without proper developer tooling, even the most impressive custom silicon will sit underutilized, as developers across all segments will have little motivation to adopt it

NVIDIA's continued dominance in AI development owes much to its developer ecosystem, built around CUDA: the parallel computing platform and programming model, introduced in 2006, that lets developers write and optimize code to run on NVIDIA's hardware. Creating a comparable development environment for custom chips requires substantial financial investment and years of ongoing development. Even after achieving technical parity, hyperscalers face the challenge of convincing developers to adopt their new platforms, which is no small feat given established workflows and institutional knowledge.

Potential Approaches

For hyperscalers seeking to expand the adoption of their custom chips beyond internal workloads, translation layers represent a promising strategy. These would help developers convert code originally written for specific vendors (typically NVIDIA) into formats compatible with hyperscalers' custom chips.

This approach parallels multi-cloud strategies, where companies use different cloud providers for different purposes. Similarly, developers could leverage different chips for different workloads, but this becomes practical only when they don't need to extensively refactor their code for each target platform.
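To illustrate the idea, here is a minimal sketch of a dispatch layer in plain Python. It is not any vendor's actual SDK: the backend names (`gpu`, `custom_asic`), the `register` decorator, and the `run` function are all hypothetical, and the "kernels" are ordinary Python stand-ins for what would be vendor-specific calls. The point is only that application code is written once against a common interface, and the target chip is selected by configuration rather than by refactoring.

```python
from typing import Callable, Dict, List

Vector = List[float]
Kernel = Callable[[Vector, Vector], Vector]

# Hypothetical registry: backend name -> {operation name -> implementation}.
_BACKENDS: Dict[str, Dict[str, Kernel]] = {}


def register(backend: str, op: str) -> Callable[[Kernel], Kernel]:
    """Decorator that registers an implementation of `op` for `backend`."""
    def decorator(fn: Kernel) -> Kernel:
        _BACKENDS.setdefault(backend, {})[op] = fn
        return fn
    return decorator


@register("gpu", "add")
def _gpu_add(a: Vector, b: Vector) -> Vector:
    # Stand-in for a call into a GPU vendor's library.
    return [x + y for x, y in zip(a, b)]


@register("custom_asic", "add")
def _asic_add(a: Vector, b: Vector) -> Vector:
    # Stand-in for a call into a (hypothetical) custom-chip SDK.
    return [x + y for x, y in zip(a, b)]


def run(op: str, a: Vector, b: Vector, backend: str) -> Vector:
    """Look up the backend-specific implementation and execute it."""
    try:
        kernel = _BACKENDS[backend][op]
    except KeyError:
        raise NotImplementedError(f"{op!r} is not available on backend {backend!r}") from None
    return kernel(a, b)


def model_step(a: Vector, b: Vector, backend: str) -> Vector:
    # Application code: identical regardless of the target chip.
    return run("add", a, b, backend=backend)


for target in ("gpu", "custom_asic"):
    print(target, model_step([1.0, 2.0], [3.0, 4.0], backend=target))
```

A real translation layer has a much harder job, since it must preserve numerical behavior and performance across very different hardware, but the developer-facing contract is the same: unsupported operations fail loudly, and supported ones need no code changes.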

Looking Ahead

Understanding the economics of custom silicon is crucial for projecting how this landscape might evolve. As we continue this series, we'll break down these economics in detail, clarifying the current state and exploring how the barriers to entry might gradually lower, potentially making custom chips economically viable for organizations beyond hyperscalers.

For developers and startups today, the path forward involves carefully weighing the benefits of custom solutions against the practical realities of development resources, time constraints, and ecosystem compatibility. While the promise of hyperscaler-derived benefits is enticing, the current landscape suggests that most smaller players will continue to rely on standardized solutions for the immediate future—while keeping a close eye on evolving opportunities in the custom silicon space.

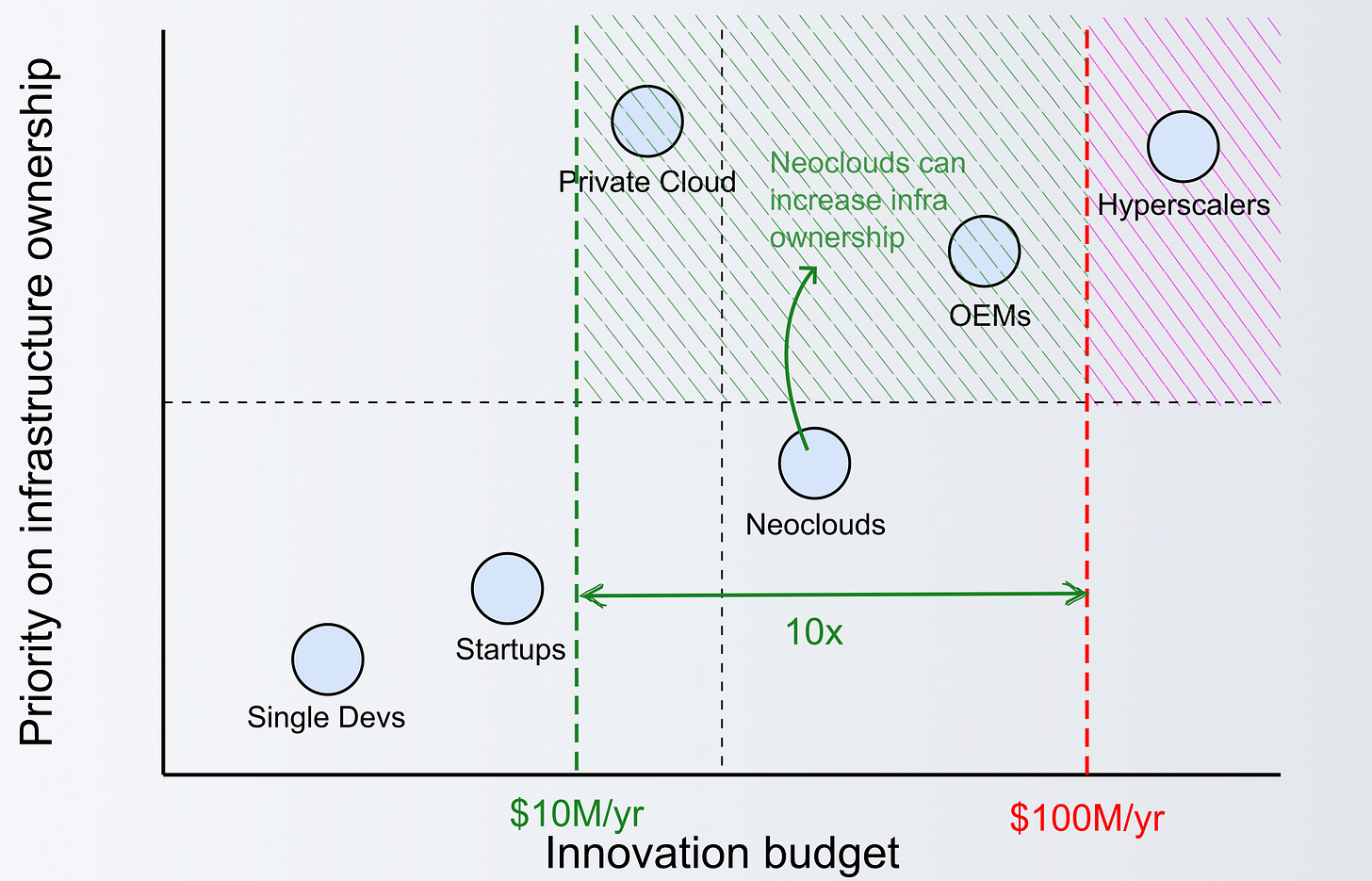

In the following articles, we will dive into the scenario depicted in the image above.

We are going to try and answer the following questions:

- What does the world look like if custom-chip design gets 10x cheaper (from $100M to $10M), allowing neoclouds to consider creating their own processors?
- What is the practical impact on the way developers deploy their applications?
- How can chiplets become the main enabler of a new age of custom processors?

This article is the first in a series exploring the implications of custom chip development for the broader technology ecosystem. In subsequent installments, we'll delve deeper into the economics of custom silicon, examine specific use cases, and project future trends in this rapidly evolving domain.